Latest Facts

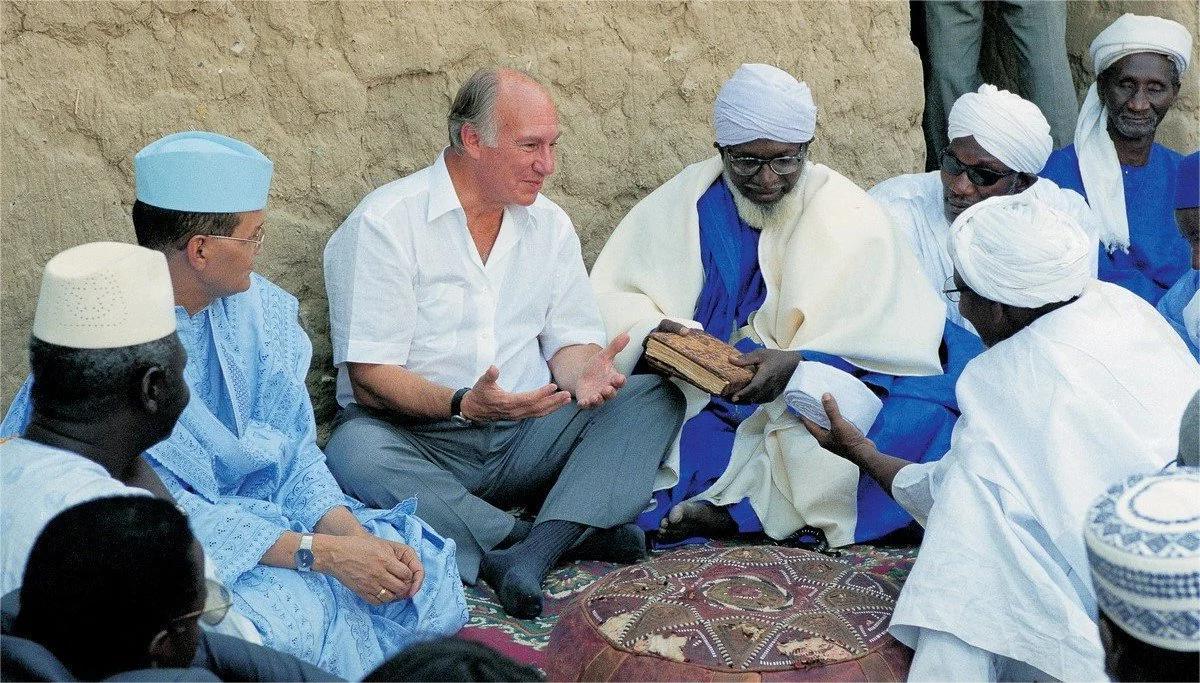

Religion

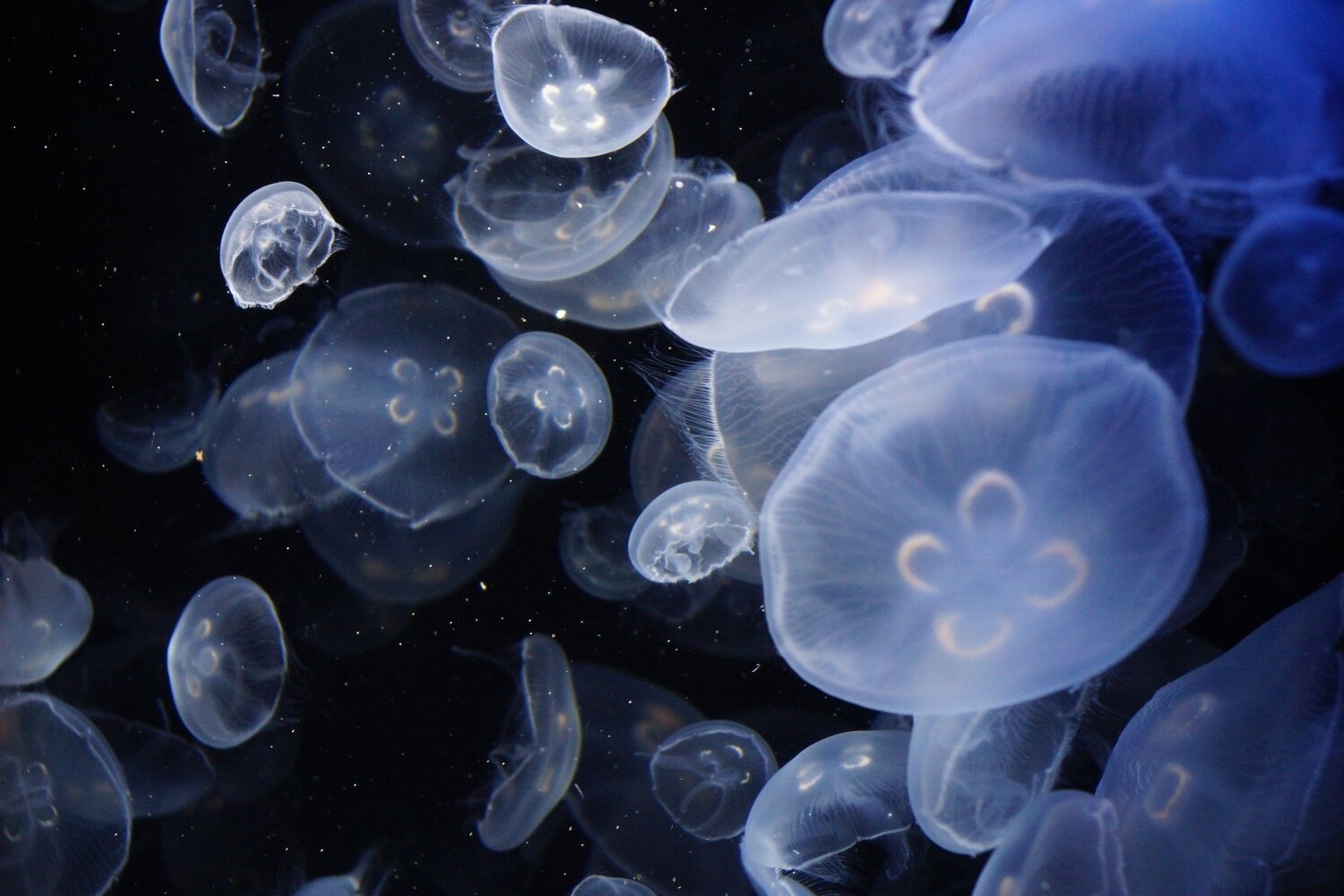

Animals

Popular Facts

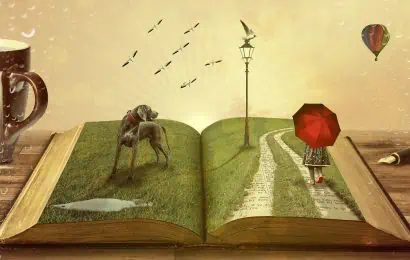

11 Best Animation Movies for Adults

Ever thought animated movies were just for kids? Think again! Animation has grown up, offering a treasure trove of films that cater to adult tastes. From dark comedies to thought-provoking […]