Popular Facts

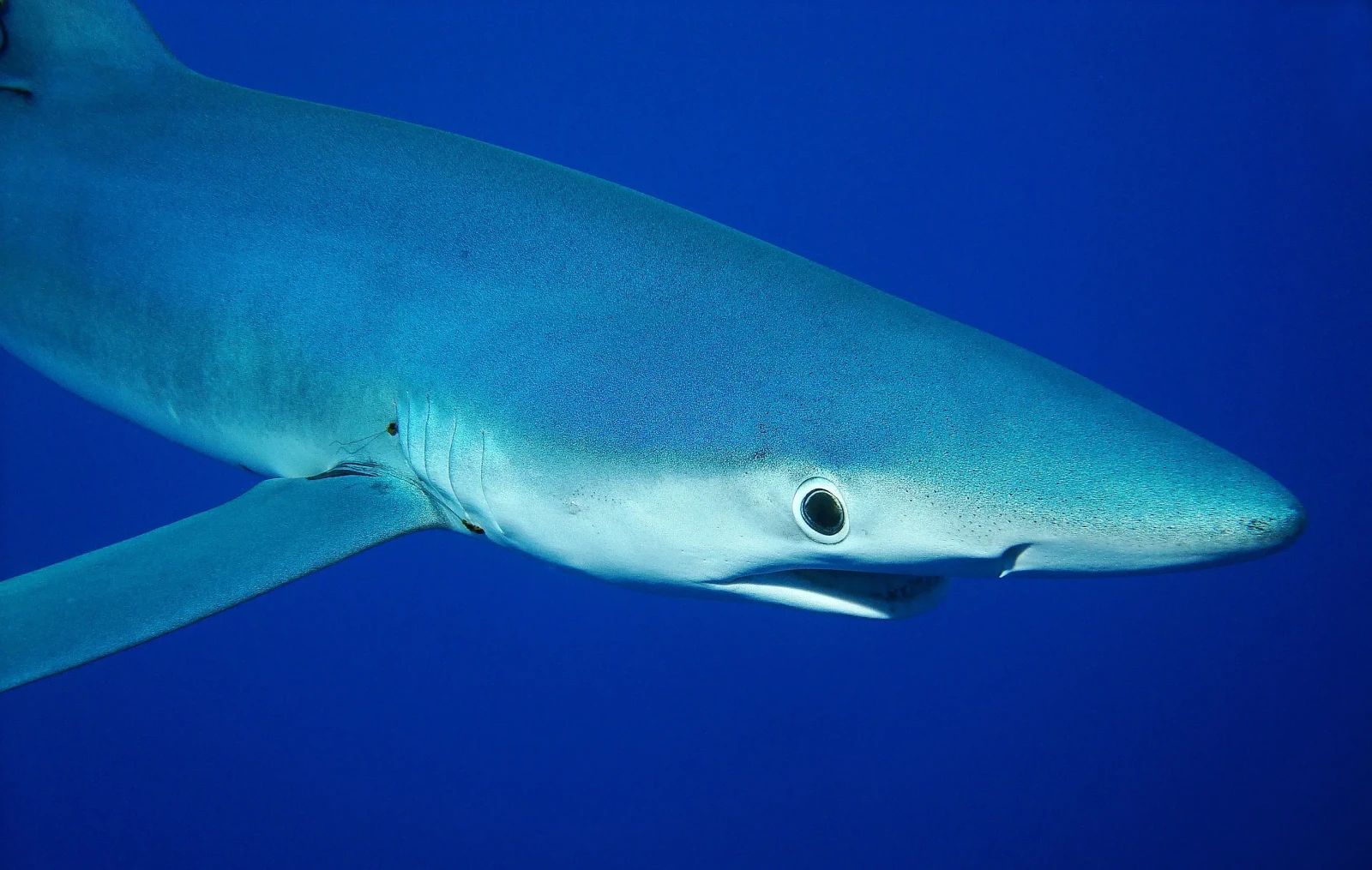

20 Ugliest Animals You Won’t Believe Are Real

Have you ever come across an animal so strange-looking that you had to do a double-take? Nature has a way of surprising us with its bizarre creations, and some creatures […]